Only need 2 iterations: 5 candidates for the first iteration, thenĥ // 2 = 2 candidates at the second iteration, after which we know whichĬandidate performs the best (so we don’t need a third one). For example if we start with 5 candidates, we Iteration might use less than 640 samples, which means not using all theĪvailable resources (samples).

Iterations: if we start with a small number of candidates, the last īut depending on the number of candidates, we might run less than 7 In theory, with min_resources=10 and factor=2, weĪre able to run at most 7 iterations with the following number of In HalvingRandomSearchCV, and is determined from the param_gridĬonsider a case where the resource is the number of samples, and where we The number of candidates is specified directly

Min_resources is the amount of resources allocated at the first Number of candidates (or parameter combinations) that are evaluated. Successive halving search are the min_resources parameter, and the Choosing min_resources and the number of candidates ¶īeside factor, the two main parameters that influence the behaviour of a > # explicitly require this experimental feature > from sklearn.experimental import enable_halving_search_cv # noqa > # now you can import normally from model_selection > from sklearn.model_selection import HalvingGridSearchCV > from sklearn.model_selection import HalvingRandomSearchCVĬomparison between grid search and successive halvingģ.2.3.1. Need to explicitly import enable_halving_search_cv: These estimators are still experimental: their predictionsĪnd their API might change without any deprecation cycle.

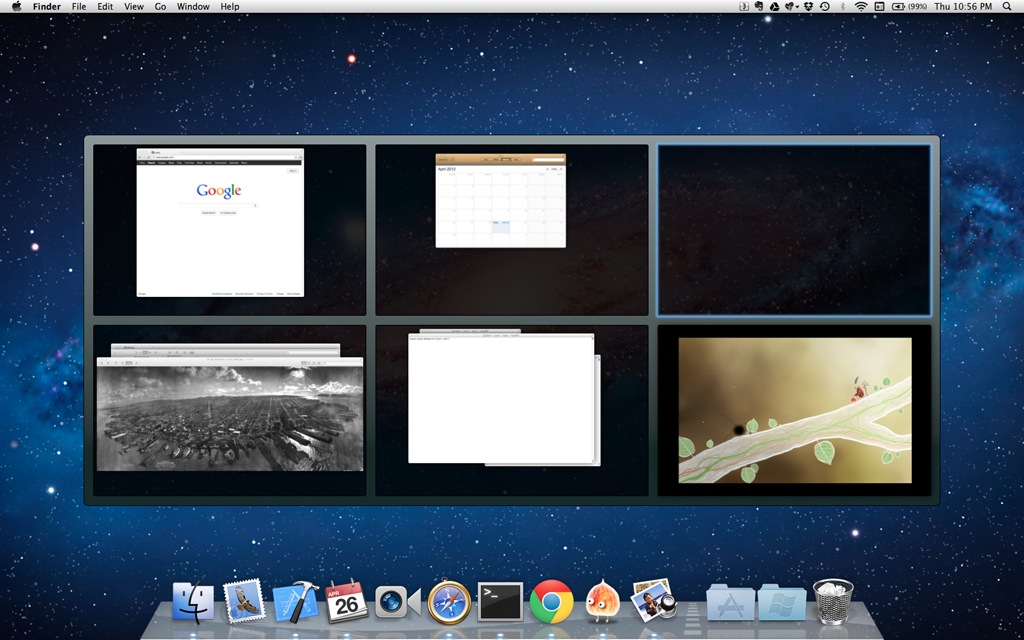

Is available through tuning the min_resources parameter. aggressive_elimination=TrueĬan also be used if the number of available resources is small. HalvingGridSearchCV and the number of candidates (by default) and Search in our implementation, though a value of 3 usually works well.įactor effectively controls the number of iterations in Min_resources, factor is the most important parameter to control the Of candidates is divided by the same factor. Number of resources per candidate is multiplied by factor and the number The rate at which the number of candidates decreases. Theįactor (> 1) parameter controls the rate at which the resources grow, and Interactions are described in more details in the sections below. We here briefly describe the main parameters, but each parameter and their These are the candidates that haveĬonsistently ranked among the top-scoring candidates across all iterations.Įach iteration is allocated an increasing amount of resources per candidate, Resource is typically the number of training samples, but it can also be anĪrbitrary numeric parameter such as n_estimators in a random forest.Īs illustrated in the figure below, only a subset of candidates Iteration, which will be allocated more resources. Only some of these candidates are selected for the next Parameter combinations) are evaluated with a small amount of resources at SH is an iterative selection process where all candidates (the Halving (SH) is like a tournament among candidate parameter combinations. Search a parameter space using successive halving. HalvingRandomSearchCV estimators that can be used to Scikit-learn also provides the HalvingGridSearchCV and Searching for optimal parameters with successive halving ¶ The Journal of Machine Learning Research (2012)ģ.2.3. 
Random search for hyper-parameter optimization, Param_grid = [ Ĭomparing randomized search and grid search for hyperparameter estimation compares the usage and efficiency The grid search provided by GridSearchCV exhaustively generatesĬandidates from a grid of parameter values specified with the param_grid Possibly by reading the enclosed reference to the literature. The estimator class to get a finer understanding of their expected behavior, It is recommended to read the docstring of Impact on the predictive or computation performance of the model while othersĬan be left to their default values. Note that it is common that a small subset of those parameters can have a large Specialized, efficient parameter search strategies, outlined inĪlternatives to brute force parameter search. Much faster at finding a good parameter combination.Īfter describing these tools we detail best practices applicable to these approaches. HalvingGridSearchCV and HalvingRandomSearchCV, which can be Both these tools have successive halving counterparts Given number of candidates from a parameter space with a specifiedĭistribution. Scikit-learn: for given values, GridSearchCV exhaustively considersĪll parameter combinations, while RandomizedSearchCV can sample a Two generic approaches to parameter search are provided in An estimator (regressor or classifier such as ()) Ī method for searching or sampling candidates
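The two generic approaches described above can be illustrated with a minimal sketch. It runs GridSearchCV over a small explicit grid and RandomizedSearchCV over a mix of a list and a continuous distribution. The iris dataset, the SVC estimator, and every parameter value are illustrative choices for this sketch and are not taken from the text. It also assumes scipy.stats.loguniform is available (SciPy 1.4 or later).

```python
from scipy.stats import loguniform
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)

# Exhaustive: every combination in the grid is evaluated (3 x 2 = 6 candidates).
# The values here are illustrative, not recommendations.
param_grid = {"C": [1, 10, 100], "kernel": ["linear", "rbf"]}
grid = GridSearchCV(SVC(), param_grid, cv=5)
grid.fit(X, y)

# Randomized: exactly n_iter candidates are sampled; lists are sampled
# uniformly and distributions are sampled through their rvs() method.
param_distributions = {"C": loguniform(1e-1, 1e3), "kernel": ["linear", "rbf"]}
rand = RandomizedSearchCV(
    SVC(), param_distributions, n_iter=6, cv=5, random_state=0
)
rand.fit(X, y)

print("grid:", grid.best_params_, round(grid.best_score_, 3))
print("random:", rand.best_params_, round(rand.best_score_, 3))
```

The grid search evaluates all 6 combinations. The randomized search evaluates exactly n_iter sampled candidates, regardless of how large the underlying space is.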

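The following sketch shows the experimental import from the successive halving section in context and fits HalvingGridSearchCV with the number of samples as the resource. The synthetic dataset, the random forest, the grid, and the choice of factor=3 with min_resources=30 are all assumptions made for illustration. After fitting, the n_resources_ and n_candidates_ attributes record the resources and candidate counts used at each iteration.

```python
# The experimental flag must be imported before the halving estimators.
from sklearn.experimental import enable_halving_search_cv  # noqa: F401
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import HalvingGridSearchCV

X, y = make_classification(n_samples=1000, random_state=0)

# 3 x 3 = 9 candidates (illustrative values).
param_grid = {"max_depth": [3, 5, None], "min_samples_split": [2, 5, 10]}

search = HalvingGridSearchCV(
    RandomForestClassifier(n_estimators=20, random_state=0),
    param_grid,
    resource="n_samples",  # the resource is the number of training samples
    factor=3,              # resources grow x3, candidates shrink roughly /3
    min_resources=30,      # samples given to each candidate at the first iteration
    random_state=0,        # controls the subsampling of the dataset
)
search.fit(X, y)

print("resources per iteration:", search.n_resources_)
print("candidates per iteration:", search.n_candidates_)
print("best:", search.best_params_)
```

With factor=3, each candidate starts with 30 samples and then 90. Meanwhile, the 9 initial candidates are cut down at every iteration until the best one is refit.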

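The min_resources and factor arithmetic from the section on choosing min_resources can be made concrete with a hypothetical helper, halving_schedule. It follows the simplified rule described in the text. Resources are multiplied by factor at each iteration, and the candidate count is floor-divided by factor (as in "5 // 2 = 2"). The search stops once a single candidate would remain or the next resource level would exceed the budget. The budget of 1000 samples is an assumption. This models the reasoning only and is not scikit-learn's implementation, whose rounding of candidate counts may differ.

```python
from typing import List, Tuple


def halving_schedule(n_candidates: int, min_resources: int, factor: int,
                     max_resources: int) -> List[Tuple[int, int]]:
    """Hypothetical helper: (n_candidates, n_resources) for each iteration.

    Simplified model of successive halving: candidates are floor-divided
    by `factor` and resources are multiplied by `factor` each iteration.
    """
    schedule = []
    resources = min_resources
    while resources <= max_resources:
        schedule.append((n_candidates, resources))
        n_candidates //= factor
        if n_candidates <= 1:
            # The best of the candidates just evaluated is the winner,
            # so no further iteration is needed.
            break
        resources *= factor
    return schedule


# Assumed budget of 1000 samples, min_resources=10, factor=2.
print(halving_schedule(5, 10, 2, 1000))
# [(5, 10), (2, 20)]
print([r for _, r in halving_schedule(1000, 10, 2, 1000)])
# [10, 20, 40, 80, 160, 320, 640]
```

Starting with 5 candidates stops after 2 iterations, so each candidate sees at most 20 samples. Starting with 1000 candidates uses all 7 resource levels and still carries 15 candidates into the last iteration.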